CHAPTER SEVEN

Skolemization

Suppose that

∃x Ax

is true in a model M: so there exists an element u of U such that Au is true. Now consider the formula

Ax .

Is this true in M? Not necessarily: the constant x may not be defined in M. (After all, M is 'tailored' to the first formula, the existential one.) Even if it were defined, x might well not refer to a U-element that made Ax true. What we can say is this:

if ∃x Ax is true in M,

then there exists a model M' in which Ax is true

- that model, namely, which is the same as M except that it has a constant x which refers to u, the U-element which has Val[Au] = T in M. If by any chance x already occurs as a constant somewhere in the formula A, then just invent a new constant not so far heard-of. The converse is easier:

if Ax is true in N, then so is ∃x Ax.

Putting the two statements together, we have:

there exists a model in which ∃x Ax is true

IFF there exists a model in which Ax is true (*)

from which we may deduce (by 'logical transposition')

there is no model in which ∃x Ax is true

IFF there is no model in which Ax is true

and thence

∃x Ax is false in all models IFF Ax is false in all models

which we may transcribe as

( Πx (Ax) ) = O IFF Ax = O

or,

Πx (Ax) = _ IFF Ax = O .

Let us check that this still makes sense. The left-hand statement says: in all models, every single instance of Ax has the value O. The right-hand statement says: in all models, to whichever U-element the constant x is linked, Ax has the value O. OK?

(Recall that A here stands for a formula, not necessarily an atomic formula. In fact an atomic formula could never make either statement true, for in such a case there would be plenty of models which simply assigned the value _ to some Ax. But if Ax stood for the composite formula Px(Px), then both statements would be true, however value was assigned to the atomic instances Px.)

Suppose we say that a formula E is satisfiable iff there exists a model M such that

E =M _ .

Then, backtracking to the statement marked (*) above, we may say that the formulae ∃x Ax and Ax are interchangeable so far as satisfiability is concerned; and we may write

∃x Ax ≈ Ax

to represent this state of affairs. Transcribing into Laws of Form notation,

(Πx (Ax)) ≈ Ax,

or, letting B stand for not-A,

(Πx Bx) ≈ (Bx).

Pitfall 7.1

A≈B does not imply (A)≈(B). The first statement says, there is a model M such that A=M _ iff there is a model N such that B=N _ . The second says, there is a model M such that A=M O iff there is a model N such that B=N O . These are two different claims.

Lemma 7.2

If a wff W has the form A (Πx Bx), and if A contains no top-level Pi-operators (that is, no Pi-operators whose scope is the whole expression including (Πx Bx) ), then

W ≈ A (Bx).

Proof

If W is satisfied by M, then so are A and (Πx Bx) individually; and A and (Bx) are both satisfied by M', which differs from M only in the addition of a constant x chosen so that Bx = O. Then A(Bx) is also satisfied by M'. Going the other way, if A(Bx) is satisfied by N, then so is W ◊

Corollary 7.3

Suppose A in the lemma has the form (Πy Cy), then

(Πy Cy)(Πx Bx) ≈ (Πy Cy)(Bx) ≈ (Cy)(Bx) .

Thus the operation of Skolemization - as we shall call it - may be performed on each eligible factor of a wff, separately. The operation consists of removing a Pi-operator from a space of depth one, and, by way of compensation, replacing the corresponding variable, x say, wherever it occurs in the scope-formula of Πx, by a constant x.

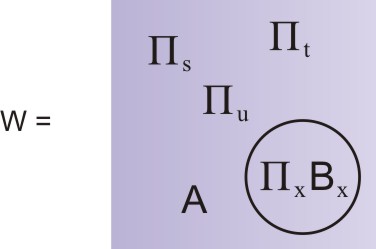

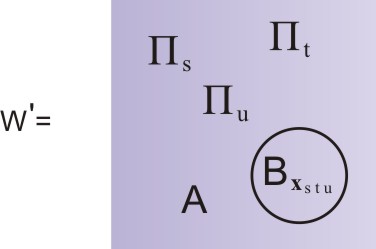

We have not yet mentioned a major complication, with far-reaching consequences for our endeavour. What if, contrary to the conditions of Lemma 7.2, the formula A ('the rest of the wff') does contain some top-level Pi-operators? (By top-level we mean that they are not buried within at least one circle.) By Corollary 6.3 their scope must be understood to be the whole of W. Suppose the corresponding variables are s, t, ... , u, so W looks something like this:

Now, if W is to be satisfied by some model M, then the value of the formula (Πx Bx) must be _ under each and every instantiation of the variables s, t, ..., u by U-elements. In other words, each and every instantiation of (Πx Bx) has to be satisfied by M. It is really quite difficult to give satisfaction to W! (This is all assuming that B actually involves s, t, ..., u; if it doesn't then each of these instantiations is exactly the same and they don't really need to be considered separately.) Now, each time (Πx Bx) is satisfied, it gives rise to an instance x which as it were 'does the satisfying' (i.e. is such that (Bx)=_); but supposing that B depends on the instantiation of s, t, ..., u, so will x. The same x will not do the trick each time. Thus the 'constant' x is really a function of s, t, ... u. But this turns out to be no obstacle to Skolemization. For the x that is a function of s, t, ...u we write

xst...u

and for W', the Skolemized version of W, we have

Now, we claim that if W is satisified by a model M, then there exists a model M' which satisifes W' (and vice versa). But for the claim to even make sense we need to make sure that we understand what is meant by a model for W'. How do we deal with that formula

Bxst...u ?

All that is needed is that we require our model, instead of specifying a once-and-for-all reference for the constant x, to specify a function x(s, t, ...u); that is, for each instantiation s, t, ...u of s, t, ...u the model has to direct us to the U-element

xst...u .

Because the model must already specify the value of B under any instantiation of its variables, we do not have to make any provision specially for the value of terms like

Bxst...u

or indeed

(Bxst...u) .

It is values like these which are need for the evaluation of W'. Now, if W is satisfied by M, then for each instantiation of s, t, ...u (as s, t, ...u) there exists an x, an element of U, which makes Bx take the value O. This determines the U-element to which the function x(s, t, ...u) must point, in M', when s, t, ...u are instantiated as s, t, ...u.

As usual, the 'vice versa' part is easy, as the model that does for W' will also do for W. We have thus established

Lemma 7.4

With W and W' defined as above,

W ≈ W' ◊

Thus, Skolemization allows us to get rid of any Pi-operators which are situated at level -1 in our wff. But this will allow other Pi-operators to rise up towards the surface, if there are any at lower levels. Thus a Pi that was at level -2, but blocked by a Pi at level -1, will now be able to rise up to level 0 - into the light of day, as it were. This in turn may have repercussions at lower levels. The process of bubbling up may be allowed to continue until it is once again blocked (by Pi's stuck in level -1). Then we have another round of Skolemization, to get rid of the 'blocking' Pi's from level -1. Note that by this time, other Pi's may well have risen to the top level: their variables will have to be included in the subscripts of any further 'constant-functions' that are introduced by Skolemization.

The whole process (bubbling up and Skolemization) may now be allowed to continue until all remaining Pi-operators are in the top level, that is, in the outer space of the wff. Roughly half the original variables can be expected to live on as variables in the final Skolemized expression; the other half will have been converted into constant-functions. Perhaps 'subscripted constants' would be a slightly less confusing term?

Let us denote the final expression Wsk. W and Wsk are interchangeable so far as satisfiability is concerned. If one is satisfiable, so is the other.

Example 7.5

By way of a simple example, let us take the two wffs involved in Pitfall 6.5, at the end of the last chapter. These were

Under the action of Skolemization, these become

respectively. The second of these is satisfied by the natural numbers, with Axy meaning 'y is the next number after x', and yx always signifying 'the next number after x'. The first is satisfied by the natural numbers, with Axy meaning 'x is greater than or equal to y', and y signifying the number 1.